Sound design in itself is super intriguing, that’s a fact, but throwing AI (artificial intelligence) into the mix can bring things to a whole new level.

As producers, knowing all the best AI-enhanced sound design tips, tricks, and techniques can seriously enhance your tracks.

Plus help you unlock new creative possibilities, automate the boring stuff, and reshape how you design sound entirely.

That’s why I’m breaking down the absolute best AI-enhanced sound design techniques, like:

- Morphing sounds with AI latent space ✓

- Creating textures from text prompts for various applications ✓

- Extracting pitch and noise separately ✓

- AI-generated ambient soundscapes ✓

- Convolution reverb from raw samples ✓

- How to enhance audio with the help of AI ✓

- Vocal-style modulations on synths ✓

- Granular design driven by language ✓

- Resynthesizing analog gear tones ✓

- Eliminating background noise ✓

- Much more about AI-enhanced sound design tips & techniques ✓

By the end, you’ll know all about how to use AI to design some of the most advanced sounds possible.

Plus you’ll be able to create music, shape unique textures, enhance audio, and edit like an absolute boss.

This way your tracks will stand out from the crowd, and you’ll finally know how to have AI handle all the boring, tedious tasks for you.

I’m telling you, the future of music production includes AI technology, so trust me, you want to get on board so you’re not left to drown.

Table of Contents

- 15+ AI-Enhanced Sound Design Tips, Tricks, and Techniques

- 1. Enhance Your Tracks with AI-Driven FX Chains

- 2. Use Latent Space Morphing To Generate Hybrid Timbres

- 3. Translate Natural Language Into Granular Synthesis Maps

- 4. Extract Pitch And Noise Layers With AI Separation

- 5. Evolve A Single Sound Using AI-Based Timbral Prediction

- 6. 3D Panning Curves Using AI Spatial Prediction

- 7. Apply AI Gesture Mapping For Real-Time Sound Control

- 8. Generate Textures With AI-Powered Diffusion Audio Models

- 9. Use AI To Create Dynamic Convolution Reverb IRs

- 10. Model Vocal-Like Expressions In Non-Vocal Sounds

- 11. Build Fractal Audio Textures From Harmonic Analysis

- 12. Let AI Detect Sweet Spots In Complex Sound Chains

- 13. Apply AI-Driven Macro Mapping For Creative FX Control

- 14. Resynthesize Vintage Hardware Tone With AI Modeling

- 15. Use AI To Automatically Loop Ambient Beds

- 16. Transfer Sonic Identity From One Sound To Another

- 17. Map Sonic Gestures To MIDI With AI Audio Classification

- Final Thoughts

15+ AI-Enhanced Sound Design Tips, Tricks, and Techniques

So, now that you know how AI-enhanced sound design can completely enhance your skills and beats, let’s get into the good stuff. These tips are all hands-on, forward-thinking, and will help you create, edit, and enhance audio in ways that feel fresh, powerful, and way more intuitive.

1. Enhance Your Tracks with AI-Driven FX Chains

Unison Audio’s Sound Doctor is an innovative plugin designed to generate professional-quality FX chains tweaked to your specific style.

By using AI algorithms, Sound Doctor analyzes your input and suggests FX chains that can transform ordinary sounds into captivating elements within your mix.

To do this, you simply:

- Load Sound Doctor onto Your Track: Insert the plugin on the audio track you wish to enhance. This could be anything from a vocal line to a drum loop.

- Select a Style or Genre: Sound Doctor offers various style-specific presets. Choose one that aligns with the vibe you’re aiming for.

- Generate an FX Chain: With a single click, Sound Doctor will create a custom FX chain based on your selected style. The AI considers factors like tempo, key, and the sonic characteristics of the input to create the perfect chain.

- Fine-Tune Parameters: While the generated FX chain is ready to use, you can adjust individual parameters to better fit your mix. Tweak settings like reverb decay time, delay feedback, or distortion intensity to achieve the desired effect.

Let’s say you have a dry synth lead that lacks character…

By applying Sound Doctor with a “Synthwave” preset, it might generate an FX chain comprising a chorus effect to widen the sound.

As well as tape saturation to add warmth, and a reverb with a 2.5-second decay to create depth (whatever sounds perfect, it will add).

Adjusting the saturation drive to 4.5 dB and setting the reverb mix to 40% can perfect the effect even more.

This not only speeds up the sound design process but also introduces you to FX combinations you might not have considered, expanding your creative possibilities.

2. Use Latent Space Morphing To Generate Hybrid Timbres

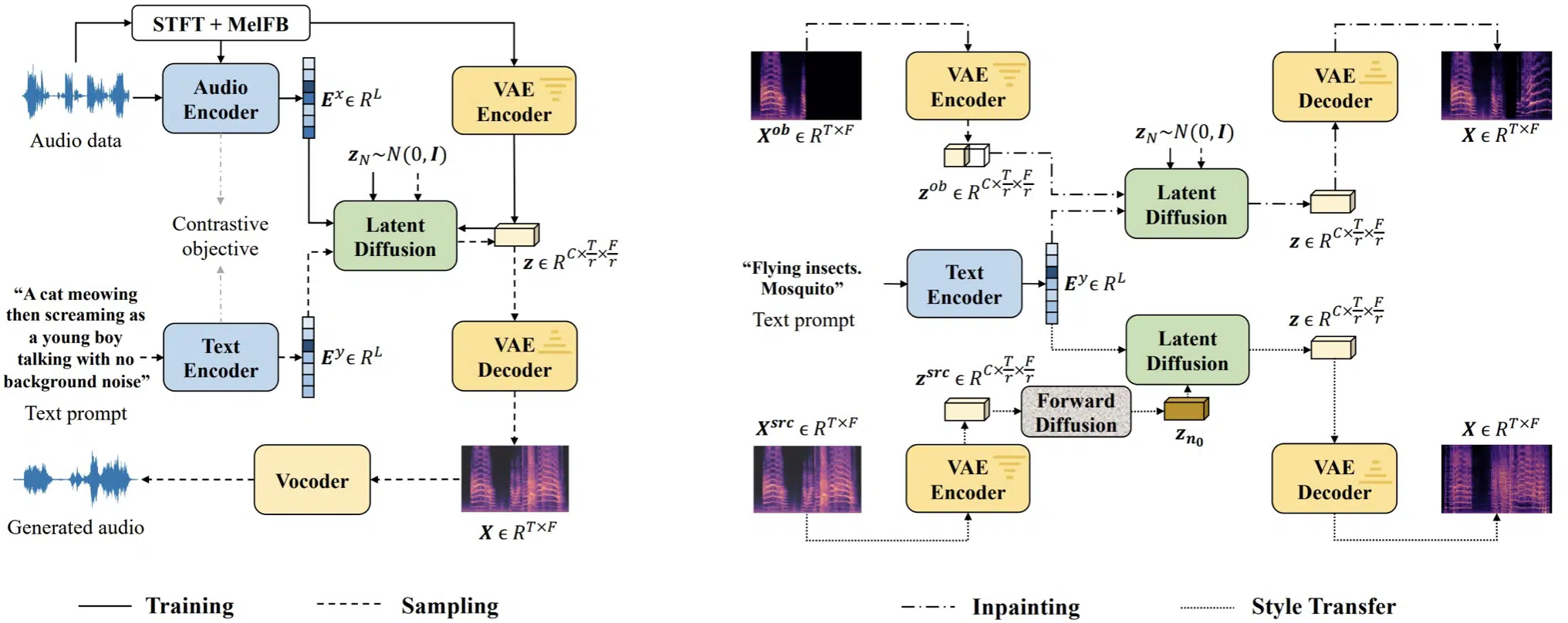

Latent space morphing is when an AI model blends the hidden characteristics of two sounds by moving through a “latent space.”

If you’re not sure, latent space is basically a digital map of how the AI understands different audio textures, such as how a sub bass relates to a synth lead in terms of timbre, dynamics, and harmonic structure.

Instead of just crossfading, it creates brand-new sound combinations with unique tone and motion, like turning a distorted 808 into something that gradually transforms into a gliding vocal pad with shimmer and bite.

You can use tools like RAVE or DDSP to morph between an 808 and a vocal pad by keeping the interpolation amount between 0.2 and 0.8 (where 0 is fully the 808 and 1 is fully the pad) for smoother results.
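To make the idea concrete, here’s a minimal sketch of the interpolation step on its own. It assumes each sound has already been encoded into a latent vector by whichever model you’re using; the tiny 4-value vectors and the function names `lerp_latents` and `morph_path` are made up for illustration, and the encoding and decoding steps are not shown.

```python
def lerp_latents(z_a, z_b, alpha):
    """Linearly blend two latent vectors; alpha=0 gives z_a, alpha=1 gives z_b."""
    if len(z_a) != len(z_b):
        raise ValueError("latent vectors must have the same length")
    return [(1.0 - alpha) * a + alpha * b for a, b in zip(z_a, z_b)]


def morph_path(z_a, z_b, steps=4, lo=0.2, hi=0.8):
    """Return `steps` blended latents with alpha evenly spaced from lo to hi."""
    if steps < 2:
        raise ValueError("need at least two steps")
    alphas = [lo + (hi - lo) * i / (steps - 1) for i in range(steps)]
    return [lerp_latents(z_a, z_b, a) for a in alphas]


# Hypothetical 4-dimensional latents standing in for an encoded 808 and vocal pad.
z_808 = [1.0, 0.0, -0.5, 2.0]
z_pad = [0.0, 1.0, 0.5, -2.0]
path = morph_path(z_808, z_pad, steps=4)
```

Each entry in `path` would then go through the model’s decoder to become audio. Staying inside 0.2 to 0.8 simply keeps every step a genuine blend rather than a near-copy of either source.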

This type of AI-enhanced sound design helps you create hybrid sound effects, leads, or ambient textures that feel completely original.

3. Translate Natural Language Into Granular Synthesis Maps

Some AI tools now let you type a short phrase like “glassy shimmer with underwater decay” and turn that into an actual granular sound design patch.

This works using machine learning models that map text input to audio features like:

- Grain size (e.g. 60–120ms)

- Density (light or heavy)

- Pitch movement (±300 cents)

Then, apply them to a generated audio texture.
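As a rough illustration of the text-to-parameter idea, here’s a minimal sketch that uses a hand-written keyword table in place of a trained model. The table `KEYWORD_RULES`, the defaults, and the function name are all hypothetical; a real system would learn this mapping from data rather than look words up.

```python
# Hypothetical keyword table: each descriptor nudges the granular parameters.
KEYWORD_RULES = {
    "glassy": {"grain_ms": 60, "pitch_cents": 300},
    "shimmer": {"density": "heavy", "pitch_cents": 300},
    "underwater": {"grain_ms": 120, "density": "light"},
    "hollow": {"grain_ms": 100, "density": "light"},
}

DEFAULTS = {"grain_ms": 90, "density": "light", "pitch_cents": 0}


def prompt_to_grain_params(prompt):
    """Map a text prompt to granular settings; later keywords override earlier ones."""
    params = dict(DEFAULTS)
    for word in prompt.lower().split():
        params.update(KEYWORD_RULES.get(word.strip(",.\"'"), {}))
    # Clamp to the ranges quoted in the text: 60-120 ms grains, +/-300 cents.
    params["grain_ms"] = max(60, min(120, params["grain_ms"]))
    params["pitch_cents"] = max(-300, min(300, params["pitch_cents"]))
    return params


patch = prompt_to_grain_params("glassy shimmer with underwater decay")
```

The point is the shape of the output: a phrase goes in, and a small set of grain size, density, and pitch values comes out that a granular engine could use.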

AI-enhanced sound design platforms like AudioLDM and Google’s SoundStorm are leading the way with these kinds of AI-generated music prompts.

So you can use them to, for instance, type “hollow metallic pulse with reverb tail” and get a 10-second ambient layer ready to drop into your intro.

It’s one of the fastest ways to turn abstract inspiration into real, usable sounds for your tracks or soundtracks.

4. Extract Pitch And Noise Layers With AI Separation

You can use AI-powered separation tools, such as Demucs for stem-style splits or DDSP-style harmonic-plus-noise models, to split a sound into its tonal (pitch-based) and noise components.

This is super useful in AI-enhanced sound design when you want to isolate a melodic element from messy field recordings.

Or, just remove background noise from raw samples.

For example, if you’re working with a gritty field recording, you can isolate the melodic bird chirps from the wind, then either remove the background noise or push it for extra texture.

Side note, if you want access to the best free foley sounds, I got you covered.

Once separated, you can process each layer differently. For example, EQ the tonal content while distorting the background noise for texture.

You can try boosting the tonal layer around 2.5kHz with a narrow EQ Q of 4, while distorting the background noise layer using a soft clipper or bitcrusher at 20% mix.
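Here’s a minimal sketch of the noise-layer half of that idea: a soft clipper feeding a bitcrusher, blended at 20% wet. It chains both effects for illustration (the text offers them as alternatives), the 8-bit depth and drive amount are assumptions, and the short sine is just a stand-in for a real separated noise layer.

```python
import math


def soft_clip(x, drive=2.0):
    """tanh soft clipper, normalized so an input of 1.0 still outputs 1.0."""
    return math.tanh(drive * x) / math.tanh(drive)


def bitcrush(x, bits=8):
    """Quantize a sample in [-1, 1] to the given bit depth."""
    levels = 2 ** (bits - 1)
    return round(x * levels) / levels


def process_noise_layer(samples, mix=0.2, bits=8):
    """Blend the crushed signal with the dry signal (mix=0.2 means 20% wet)."""
    return [(1.0 - mix) * s + mix * bitcrush(soft_clip(s), bits) for s in samples]


# Hypothetical noise layer: one cycle of a quiet sine standing in for real audio.
noise_layer = [0.5 * math.sin(2 * math.pi * n / 64) for n in range(64)]
processed = process_noise_layer(noise_layer)
```

Keeping the mix low means the original layer still dominates, with the grit sitting underneath it.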

This gives sound designers (and creative music producers) way more control over how each part of a sample evolves inside your mix.

5. Evolve A Single Sound Using AI-Based Timbral Prediction

AI timbral prediction tools learn how a sound should change over time, then help you generate smooth, evolving versions automatically.

For example, you could input a dry pad holding a single C note and have the AI add slow harmonic modulation, brightness shifts, and formant movement across 16 bars.

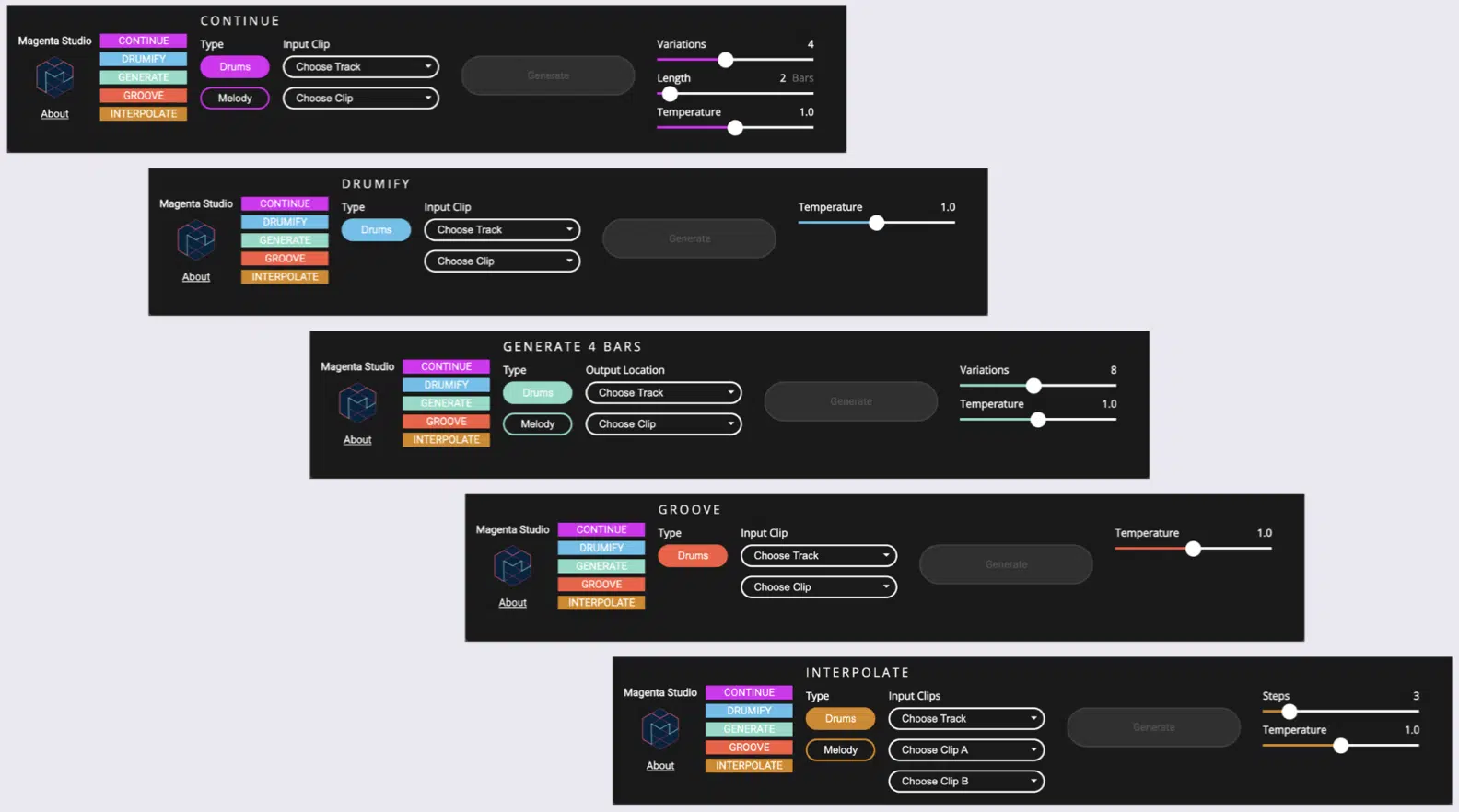

Plugins like Magenta Studio or DDSP (which we covered above) let you feed in a static pad and generate a 16-bar morphing version with no looping needed.

Try setting DDSP’s reverb mix to 0.4 and using a vibrato depth of 0.3 for subtle expression across long ambient tails.

This is great for creating (without tons of automation):

- Soundscapes

- Ambient builds

- Cinematic soundtracks

When it comes to AI-enhanced sound design, the key is to really knock people’s socks off and play around with some seriously intriguing sounds.

Even small parameter changes (like modulating brightness by ±12% over 8 bars) can create emotional movement without the sound ever feeling like it’s repeating.
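For a sense of what a ±12% swing over 8 bars looks like as data, here’s a minimal sketch that builds one slow sine cycle you could use as an automation curve. Treating “brightness” as a 2000 Hz filter cutoff, assuming 4/4 time, and using 4 points per beat are all assumptions for the example.

```python
import math


def brightness_curve(base, depth=0.12, bars=8, beats_per_bar=4, points_per_beat=4):
    """One slow sine cycle spanning `bars`, swinging `base` by +/- depth."""
    total = bars * beats_per_bar * points_per_beat
    return [base * (1.0 + depth * math.sin(2 * math.pi * i / total)) for i in range(total)]


# Hypothetical base value: a filter cutoff of 2000 Hz standing in for "brightness".
curve = brightness_curve(2000.0)
```

With these settings the curve has 128 points and moves between roughly 1760 Hz and 2240 Hz across the 8 bars.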

6. 3D Panning Curves Using AI Spatial Prediction

Spatial prediction AI models can analyze the frequency, attack, and movement of a sound, then auto-create complex 3D panning curves that move elements naturally across a stereo or binaural field.

You can use AI-enhanced sound design tools like Envelop for Live or dearVR SPATIAL CONNECT with neural mapping to place transient-heavy sounds (like snares) slightly higher and to the right, while ambient elements (like risers) glide left-to-back over a 3-second fade.

Try setting panning automation resolution to 96 ppq and modulating width between 50–100% for more realism.
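To show what a panning curve actually is under the hood, here’s a minimal stereo-only sketch: an equal-power pan law plus a 3-second glide. It’s a simplification (no height or front/back axis, and no prediction model), and the function names and the 32 points per second are assumptions.

```python
import math


def equal_power_gains(pan):
    """pan in [-1, 1] (left to right) -> (left_gain, right_gain) with constant power."""
    angle = (pan + 1.0) * math.pi / 4.0  # 0 .. pi/2
    return math.cos(angle), math.sin(angle)


def pan_glide(start, end, seconds=3.0, points_per_second=32, width=1.0):
    """Linear pan sweep; width (0.5-1.0 in the text) scales how far it travels."""
    n = int(seconds * points_per_second)
    pans = [width * (start + (end - start) * i / (n - 1)) for i in range(n)]
    return [equal_power_gains(p) for p in pans]


gains = pan_glide(start=1.0, end=-1.0)  # right-to-left over 3 seconds
```

Because the left and right gains always satisfy left² + right² = 1, the sound keeps the same perceived loudness while it moves. Dropping `width` to 0.5 keeps the glide closer to the center.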

For sound engineers and music producers focused on depth and high-quality audio, this is one of the most overlooked yet powerful AI-enhanced sound design tools available.

Remember, it’s all about that perfect balance, so you don’t want anything sounding out of place or OD.

For sound design in AI-generated music, horror textures, or experimental tracks, this cutting-edge technique adds an intense layer of evolving depth, energy, and audio quality.

7. Apply AI Gesture Mapping For Real-Time Sound Control

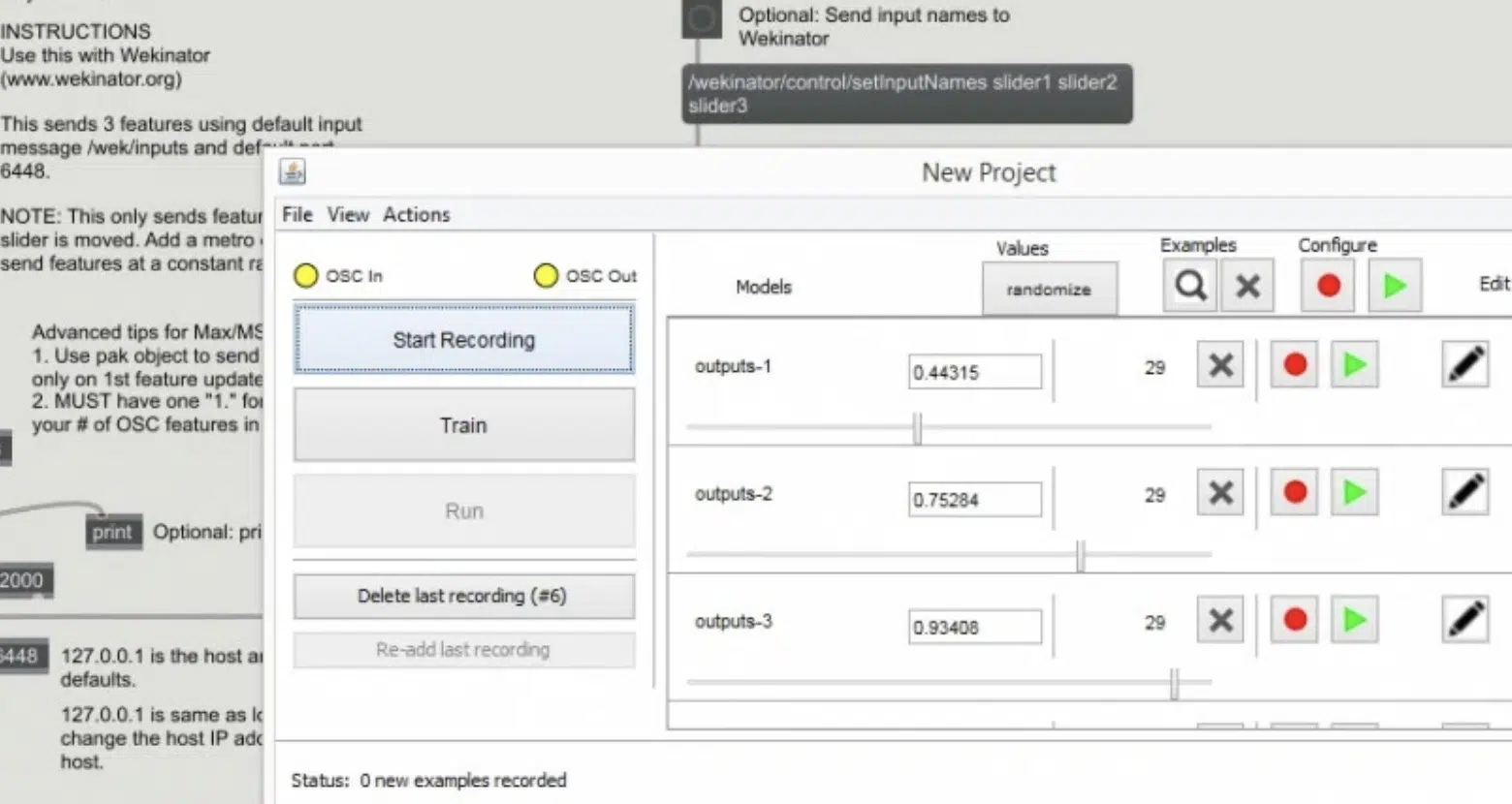

With AI gesture mapping, you can control synths or FX just by moving your hands or face in front of a webcam (it’s actually super sick).

For example, raising your hand vertically could increase filter cutoff from 200Hz to 3.2kHz, while tilting your head could adjust delay feedback from 25% to 60%.

All without touching a controller, which is a big plus.

Tools like Wekinator or Google’s MediaPipe track body movement and map it to MIDI or plugin parameters in real time.

And, you can even set up smoothing curves to keep it musical using a 0.3–0.5s response window.
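Here’s a minimal sketch of the mapping and smoothing stage, assuming your tracker already gives you a normalized hand height between 0 and 1. The 30 fps frame rate, the log-scale mapping, and the names `hand_to_cutoff` and `Smoother` are assumptions for illustration; the tracking itself is not shown.

```python
import math


def hand_to_cutoff(y, lo_hz=200.0, hi_hz=3200.0):
    """Map normalized hand height y (0 = low, 1 = high) to cutoff on a log scale."""
    y = max(0.0, min(1.0, y))
    return lo_hz * (hi_hz / lo_hz) ** y


class Smoother:
    """One-pole low-pass with a time constant of `response_s` seconds."""

    def __init__(self, response_s=0.4, frame_rate=30.0, initial=0.0):
        self.coeff = math.exp(-1.0 / (response_s * frame_rate))
        self.value = initial

    def step(self, target):
        # Move a fixed fraction of the remaining distance toward the target.
        self.value = self.coeff * self.value + (1.0 - self.coeff) * target
        return self.value


# Hypothetical tracker output: the hand jumps from the bottom to the top of frame.
smoother = Smoother(initial=hand_to_cutoff(0.0))
cutoffs = [smoother.step(hand_to_cutoff(1.0)) for _ in range(60)]
```

Even though the hand “jumps” instantly here, the cutoff eases toward 3.2kHz over the following frames, which is what keeps jittery tracking data from sounding jittery.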

Imagine modulating filter cutoff with your left hand while controlling reverb decay with your right, with no knobs required. Well, that’s what you can get.

This makes AI-enhanced sound design way more expressive, letting sound designers inject physicality into otherwise static digital environments.

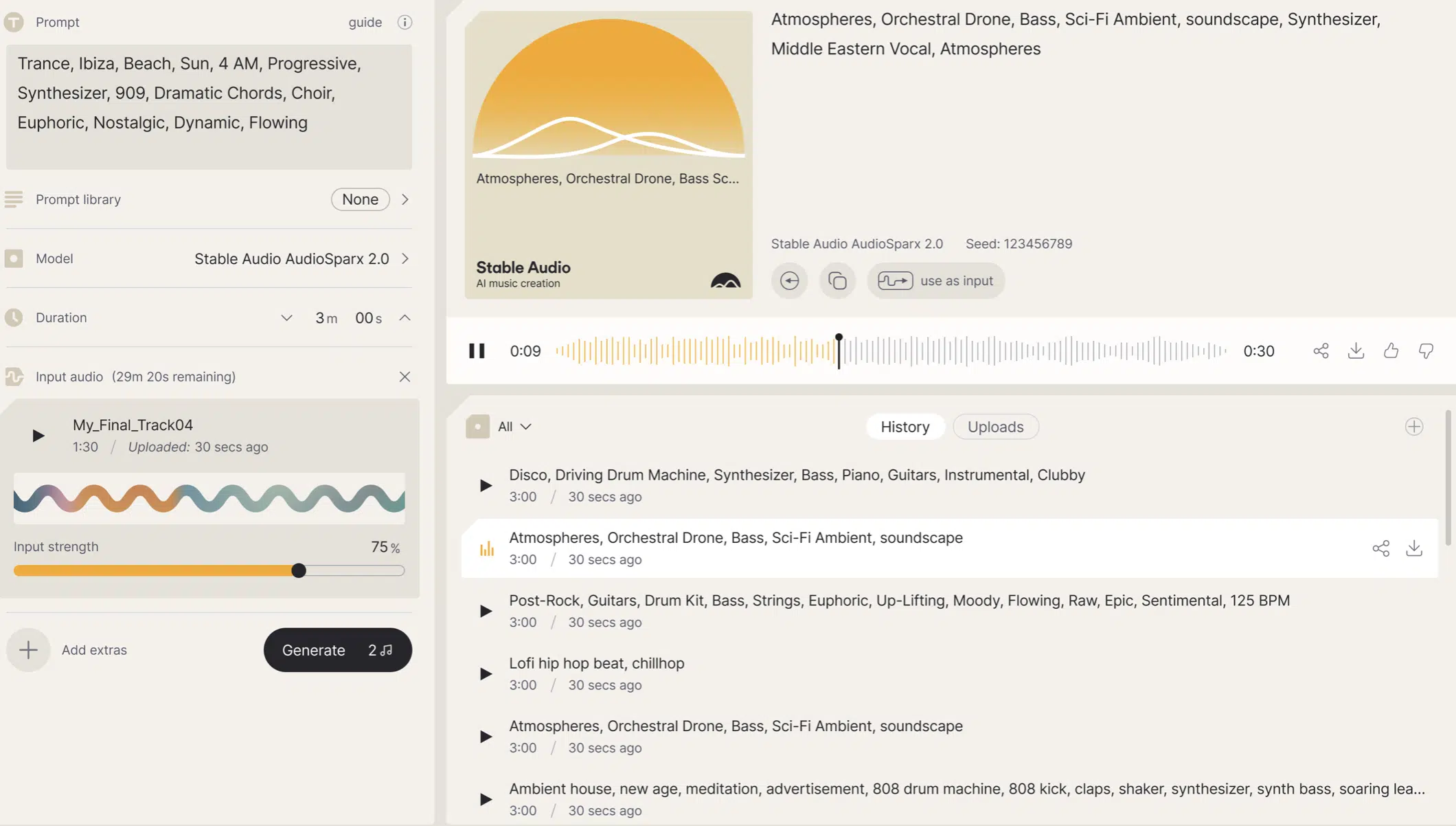

8. Generate Textures With AI-Powered Diffusion Audio Models

AI diffusion models like AudioLDM and Stable Audio use a process called reverse denoising to generate new sounds from pure noise using data-driven learning.

The AI starts with a completely noisy signal and gradually adds structure over multiple steps (kind of like sculpting audio out of chaos).

You can start with a short text prompt like “reversed metallic swirl in a cave” and let the model generate a 12-second clip with up to 44.1kHz audio quality and natural stereo spread.

For more accurate results:

- Adjust the sampling steps (try 100–200 steps for denser textures)

- Use a guidance scale between 7–10 for better text-to-audio accuracy

- Set stereo width to 80% for more movement

These kinds of AI-enhanced sound design techniques are perfect when you need unique ambient beds and complex sound effects.

Or, for some unpredictable textures for electronic music, trailers, or layered soundtracks when you really want to get crazy.

9. Use AI To Create Dynamic Convolution Reverb IRs

Instead of using static impulse responses, you can now generate custom convolution reverb IRs with AI that adapt based on your source sound’s tonal profile.

For example, an AI model can take a dry synth pluck peaking at 1kHz and build a reverb tail that highlights that frequency range while attenuating everything above 6kHz with a gentle -3dB roll-off.

Train the model to deliver a 1.3s reverb decay with pre-delay at 45ms and stereo width at 90% — ideal for atmospheric tracks or vocal FX.

Tools like IRIS, or scripting via Python using Librosa and PyTorch, can automate this entire process, adjusting IR shape based on timbre, brightness, and transient sharpness.
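To show what an impulse response is at the data level, here’s a minimal sketch that synthesizes one from decaying noise using the 1.3s decay and 45ms pre-delay from above. It’s a classic noise-burst approximation with no AI or source analysis involved, it’s mono only, and the function name and defaults are assumptions.

```python
import math
import random


def synth_impulse_response(decay_s=1.3, predelay_s=0.045, sample_rate=44100, seed=0):
    """Noise-burst IR: silence for the pre-delay, then noise fading 60 dB over decay_s."""
    rng = random.Random(seed)
    predelay = int(predelay_s * sample_rate)
    tail = int(decay_s * sample_rate)
    ir = [0.0] * predelay
    for n in range(tail):
        envelope = 10.0 ** (-3.0 * n / tail)  # 0 dB at the start, -60 dB at the end
        ir.append(envelope * rng.uniform(-1.0, 1.0))
    return ir


ir = synth_impulse_response()
```

An adaptive approach would shape this tail further, for example by filtering it around the source’s dominant frequencies, before loading it into a convolution reverb.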

It’s one of the most experimental uses of AI-enhanced sound design, which I’m all about, as I’m sure you know.

It lets you create reverb spaces that react to your music in ways traditional audio tools can’t even think about touching.

10. Model Vocal-Like Expressions In Non-Vocal Sounds

Using AI models trained on voice recordings, you can reshape non-vocal audio samples to take on vocal characteristics like:

- Pitch inflection

- Formant shifts

- Breathy modulation

This works especially well with sustained instruments like violins, flutes, or synth leads that can mimic human phrasing when shaped correctly.

For example, applying DDSP’s pitch contour modeling to a dry violin sample lets you simulate a singer’s glide between notes, especially when using vibrato rates between 5–7 Hz and formant shifts around -200 to +300 cents.
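If the cents and Hz numbers feel abstract, here’s a minimal sketch that turns them into an actual pitch contour. It only covers the math (cents to frequency ratio, plus a sinusoidal vibrato), not DDSP itself; the 30-cent vibrato depth, the 440 Hz base note, and the function names are assumptions.

```python
import math


def cents_to_ratio(cents):
    """Convert a pitch offset in cents to a frequency ratio (1200 cents = 1 octave)."""
    return 2.0 ** (cents / 1200.0)


def vibrato_contour(base_hz, rate_hz=6.0, depth_cents=30.0, seconds=1.0, points_per_second=100):
    """Frequency values for a note with sinusoidal vibrato around base_hz."""
    n = int(seconds * points_per_second)
    return [
        base_hz * cents_to_ratio(depth_cents * math.sin(2 * math.pi * rate_hz * i / points_per_second))
        for i in range(n)
    ]


contour = vibrato_contour(440.0)  # A4 with a 6 Hz vibrato
formant_ratio = cents_to_ratio(300)  # the +300 cent shift from the text, as a ratio
```

A +300 cent shift works out to a ratio of about 1.19, so formants around 1kHz would move to roughly 1.19kHz.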

It’s a next-level AI-enhanced sound design move when you want your instruments to feel more alive, emotional, and expressive (without needing an actual voice in the mix).

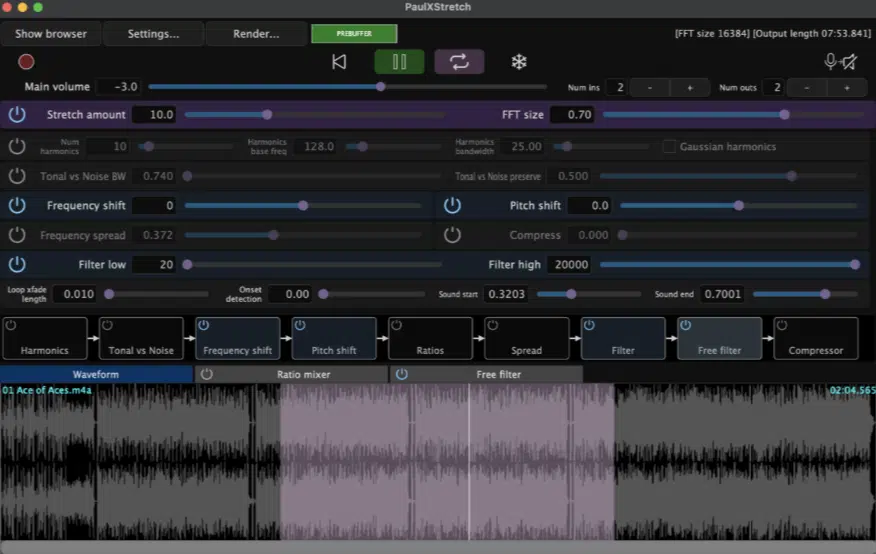

11. Build Fractal Audio Textures From Harmonic Analysis

AI fractalizers can analyze the harmonic fingerprint of a sound and recursively repeat or scale its elements to generate ultra-dense, evolving textures.

For example, you could take a simple reverb tail and let it regenerate into a full-blown soundscape over 16 bars.

Using something like PaulXStretch with AI-controlled FFT scaling (typically 4096–8192 window sizes), you can stretch a short 3-second clip up to 30 seconds or more while still preserving tonal structure.

For even more movement, feed that output into an AI-enhanced sound design engine like Emergent Drums or Granulizer 2 with:

- Grain sizes around 80ms

- Random pitch modulation

- Stereo jitter at 50%

These fractal-based sound design techniques give sound designers a powerful way to build immersive atmospheres and ambient tracks.

Or, experimental AI-generated music with infinite variation, so you can really play around with endless inspiration.

12. Let AI Detect Sweet Spots In Complex Sound Chains

Certain AI models like timbre transfer networks or dynamic threshold detectors can now scan long FX chains and flag moments of peak sonic interest based on:

- Spectral balance

- RMS dynamics

- Transient behavior

For example, you could run a 2-minute sound through a stacked FX chain, like EQ (cutting 300Hz by 4dB), bitcrusher (set at 12-bit), phaser (with depth at 60%), chorus, reverb (2.1s decay), and OTT at 50% mix.

Then, have the AI isolate a 7-second window where the transient-to-harmonic ratio hits an ideal 0.6–0.7.
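Here’s a minimal sketch of the window-scanning part, assuming you already have one transient-to-harmonic value per second of processed audio. How that ratio gets measured is not specified above, so the input list is made-up data and the function name is hypothetical.

```python
def find_sweet_spots(ratios, window=7, lo=0.6, hi=0.7):
    """Return start seconds of windows whose mean ratio falls in [lo, hi].

    `ratios` holds one transient-to-harmonic value per second of audio.
    """
    hits = []
    for start in range(len(ratios) - window + 1):
        mean = sum(ratios[start:start + window]) / window
        if lo <= mean <= hi:
            hits.append(start)
    return hits


# Hypothetical analysis data: 27 seconds with one stretch sitting in the target range.
ratios = [0.2] * 10 + [0.65] * 7 + [0.2] * 10
spots = find_sweet_spots(ratios)
```

In this toy data the only matching 7-second window starts at second 10, which is the section you’d bounce out and keep.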

These “sweet spots” are perfect for creating soundtracks, cinematic transitions, or textured loops that hit just right without having to scrub through endless audio.

This technique makes the AI-enhanced sound design process way more efficient, especially when you want high-impact results and high-quality audio without wasting creative energy.

13. Apply AI-Driven Macro Mapping For Creative FX Control

Instead of manually assigning macros, AI tools like Ableton’s “Macro Variations” (especially when paired with Max for Live AI devices) or smart parameter grouping in VSTs like Output’s Movement or Portal can intelligently map 3–5 FX controls.

They’re all based on real-time modulation patterns.

For example, an AI model might link filter cutoff (200Hz–4kHz), reverb size (1.8s–3.5s), and delay feedback (30%–70%) into one macro.

All you have to do is tweak the bezier tension curves between 0.2 and 0.9 for smooth, expressive control.
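To see how one macro can drive several parameters at once, here’s a minimal sketch using the ranges above. The curve is a simple quadratic bezier with its control point at (0.5, tension), which is one possible reading of “bezier tension”; the names `MACRO_TARGETS`, `bezier_shape`, and `apply_macro` are hypothetical.

```python
# Parameter ranges taken from the example in the text.
MACRO_TARGETS = {
    "filter_cutoff_hz": (200.0, 4000.0),
    "reverb_size_s": (1.8, 3.5),
    "delay_feedback_pct": (30.0, 70.0),
}


def bezier_shape(x, tension=0.5):
    """Quadratic bezier from (0, 0) to (1, 1) with control point (0.5, tension)."""
    x = max(0.0, min(1.0, x))
    return 2.0 * (1.0 - x) * x * tension + x * x


def apply_macro(value, tension=0.5, targets=MACRO_TARGETS):
    """Turn one macro position (0-1) into a value for every linked parameter."""
    shaped = bezier_shape(value, tension)
    return {name: lo + (hi - lo) * shaped for name, (lo, hi) in targets.items()}


settings = apply_macro(0.5, tension=0.2)
```

A tension of 0.5 gives a straight line, lower values hold the parameters back until later in the knob’s travel, and higher values push them up early.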

It’ll keep your FX movements feeling musical and alive, with no need for 20 lanes of automation just to get a sound to breathe.

These macros become a sound designer’s go-to technique in AI-enhanced sound design for shaping sound behavior in a way that’s responsive, organic, and on point.

Not to mention way more fun to create with.

14. Resynthesize Vintage Hardware Tone With AI Modeling

By using AI-enhanced sound design, you can recreate the warmth, saturation, and character of vintage gear.

You can do this by training AI models on thousands of audio samples recorded through those exact hardware units (think the SP-1200, Juno-106, or Moog filters).

Tools like IK Multimedia’s ToneX and NAM (Neural Amp Modeler) capture detailed harmonic fingerprints and dynamic response curves.

They let you apply those captures directly to clean audio files in your session.

For example, load a ToneX model trained on a Juno’s stereo chorus and apply it to dry synth chords to add analog-style width, along with a +2.5dB bump around 150 Hz and slight flutter movement at ±15 cents.

This technique gives your digital music that vintage flavor and high-quality audio warmth — all without needing access to the actual hardware.

Side note, if you want to learn all about how to emulate analog sound or the best analog emulations for the job, I got you covered.

15. Use AI To Automatically Loop Ambient Beds

Looping ambient textures usually means trimming, fading, and guessing.

Luckily, AI tools like Endlesss, Samplab, and Adobe’s Enhanced Speech engine can auto-detect flawless loop points using:

- Zero crossings

- RMS alignment

- Transient decay curves

Simply set your loop durations anywhere from 8 to 32 bars, and the artificial intelligence will lock in ideal start/end markers while adjusting phase relationships to avoid pops or any annoying phase issues.

You can also enable crossfade smoothing between 10–30 ms and set RMS thresholds around -18dB to make sure the loop flows naturally without drawing attention.
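Here’s a minimal sketch of the two basic building blocks, zero-crossing detection and a loop crossfade, with no AI involved. The 100 Hz test tone, the 8kHz sample rate, and the function names are assumptions, and it skips the RMS matching step entirely.

```python
import math


def zero_crossings(samples):
    """Indices where the signal crosses from negative (or zero) to positive."""
    return [i for i in range(1, len(samples)) if samples[i - 1] <= 0.0 < samples[i]]


def crossfade_loop(samples, start, end, fade_len):
    """Return samples[start:end] with its tail faded into the audio leading up to `start`."""
    if start < fade_len or end - start < fade_len:
        raise ValueError("not enough audio around the loop for this fade length")
    loop = list(samples[start:end])
    for k in range(fade_len):
        w = (k + 1) / fade_len  # ramps up to 1.0 on the final sample
        tail_idx = len(loop) - fade_len + k
        lead_in = samples[start - fade_len + k]
        loop[tail_idx] = (1.0 - w) * loop[tail_idx] + w * lead_in
    return loop


sample_rate = 8000
tone = [math.sin(2 * math.pi * 100 * n / sample_rate) for n in range(sample_rate)]
crossings = zero_crossings(tone)
fade = int(0.02 * sample_rate)  # 20 ms, inside the 10-30 ms range from the text
loop = crossfade_loop(tone, crossings[5], crossings[-5], fade)
```

By the end of the fade, the loop’s last sample matches the sample that originally came right before the loop start, so the jump back to the beginning lands on continuous audio instead of a click.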

This is a total game-changer for AI-enhanced sound design, especially when you’re building longform ambient beds.

Or when you’re attempting to create a smooth, evolving background noise layer for a soundtrack or visual scene.

16. Transfer Sonic Identity From One Sound To Another

AI timbre transfer lets you impose the sonic identity of one sound onto another…

This means you’re borrowing tone, color, and texture, but keeping the original timing and rhythm intact and on point.

Using tools like Google’s DDSP or SampleRNN, you can blend the harmonic content of a saxophone with the transients of a hi-hat pattern to create a wild new hybrid audio file.

For super clean results, resample both source and target recordings to 16kHz, align envelopes, and normalize RMS levels around -18dB for better tonal balance.
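The RMS step is easy to do yourself. Here’s a minimal sketch that measures a signal’s RMS level and scales it to -18dB, assuming samples are floats where 1.0 is full scale (so the target is treated as dBFS). It doesn’t cover resampling or envelope alignment.

```python
import math


def rms_db(samples):
    """RMS level in dB relative to full scale (1.0)."""
    mean_square = sum(s * s for s in samples) / len(samples)
    return 10.0 * math.log10(mean_square) if mean_square > 0 else float("-inf")


def normalize_rms(samples, target_db=-18.0):
    """Scale the signal so its RMS level lands on target_db."""
    current = rms_db(samples)
    if current == float("-inf"):
        return list(samples)  # silence stays silent
    gain = 10.0 ** ((target_db - current) / 20.0)
    return [s * gain for s in samples]


# Hypothetical source: one second of a full-scale 220 Hz sine at 16 kHz.
source = [math.sin(2 * math.pi * 220 * n / 16000) for n in range(16000)]
matched = normalize_rms(source)
```

Running both the source and target through `normalize_rms` before the transfer puts them at the same level, so neither one dominates just because it was recorded louder.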

This level of AI-enhanced sound design helps sound designers and composers create music with combinations that were never supposed to exist.

And yeah, the new possibilities here are seriously mind-blowing.

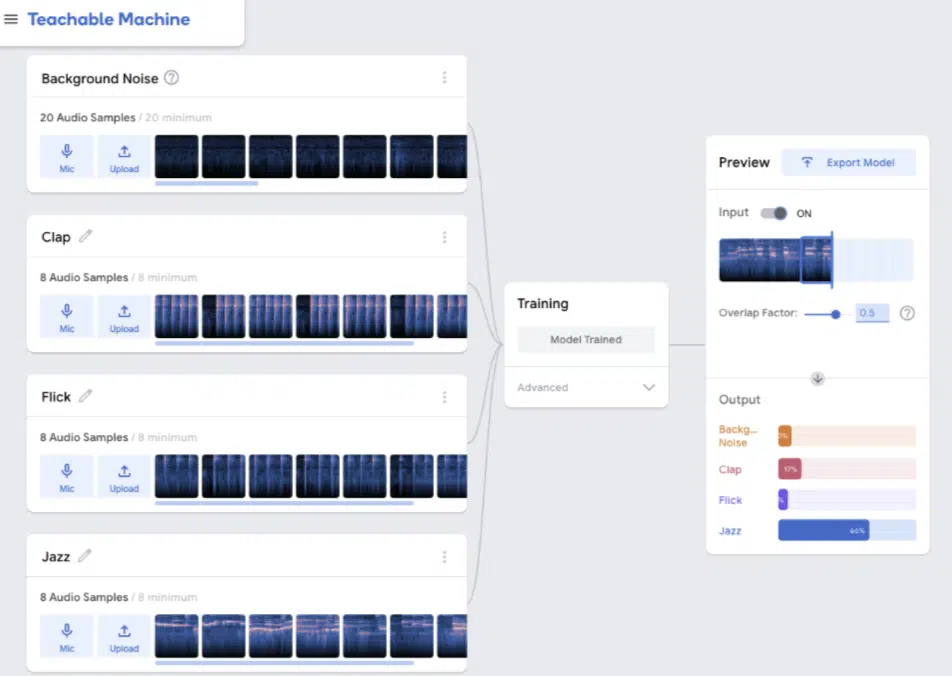

17. Map Sonic Gestures To MIDI With AI Audio Classification

You can use AI-based audio classifiers like Teachable Machine or custom TensorFlow models to detect specific sonic gestures (like a swell, impact, or riser) and convert them into MIDI triggers.

For example, an AI can detect a low-end boom (40–80 Hz burst lasting 200ms) and trigger a downlifter FX or sidechain automation when it hits.
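To show the trigger logic on its own, here’s a minimal sketch that uses a plain threshold rule in place of a trained classifier. It assumes you already have the 40–80 Hz band energy measured once per 10ms frame; the threshold, the MIDI note number, and the function name are all hypothetical.

```python
TRIGGER_NOTE = 36  # hypothetical MIDI note assigned to the downlifter


def detect_booms(band_energy, frame_ms=10, threshold=0.5, min_ms=200):
    """Return (frame_index, midi_note) triggers for sustained low-band bursts."""
    needed = min_ms // frame_ms
    triggers, run = [], 0
    for i, energy in enumerate(band_energy):
        run = run + 1 if energy >= threshold else 0
        if run == needed:  # fire once, when the burst has lasted long enough
            triggers.append((i, TRIGGER_NOTE))
    return triggers


# Hypothetical band energy: a 250 ms boom, then a 100 ms thump that is too short.
energy = [0.0] * 30 + [0.9] * 25 + [0.0] * 30 + [0.9] * 10 + [0.0] * 5
events = detect_booms(energy)
```

Only the first burst lasts the full 200ms, so it’s the only one that fires a trigger. A trained classifier would replace the threshold check, but the “detect, then emit a MIDI event” flow stays the same.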

This helps sound designers create reactive tracks and soundtracks that respond to subtle changes without having to manually automate everything.

It’s a powerful AI-enhanced sound design workflow that’s perfect for automating tedious tasks from your music production process.

It will seriously speed things up without losing audio quality.

Final Thoughts

And there you go: the most epic AI-enhanced sound design tips, tricks, and techniques to take your tracks to the next level.

Now, with this new knowledge, you’ll be able to create sounds that stand out, automate the stuff that used to take hours, and stay way ahead of the curve like a boss.

Plus successfully enhance audio, unlock totally new creative possibilities, and design FX chains, textures, and layers that feel alive and intentional.

This way, you’ll never have to worry about sounding generic or wasting time on tedious tasks again (talk about game-changing).

Remember, it’s all about experimenting, staying curious, and letting AI-enhanced sound design become a tool you lean on.

It’s not a shortcut, but a source of real inspiration.

Just make sure to explore, tweak, and push the limits of what AI-enhanced sound design can do in your workflow because the future of sound is literally in your hands.

Until next time…
